Disclosure: This analysis was performed by BackBox AI comparing its own results against tools evaluated in ProjectDiscovery's published "Vibe Coding" research. The comparison relies on PD's published summary data, not their full methodology or raw output. PD's study was designed as research into AI-assisted vulnerability discovery patterns, not as a formal competitive benchmark. Finding counts are influenced by decomposition approach (see Methodology Notes below).

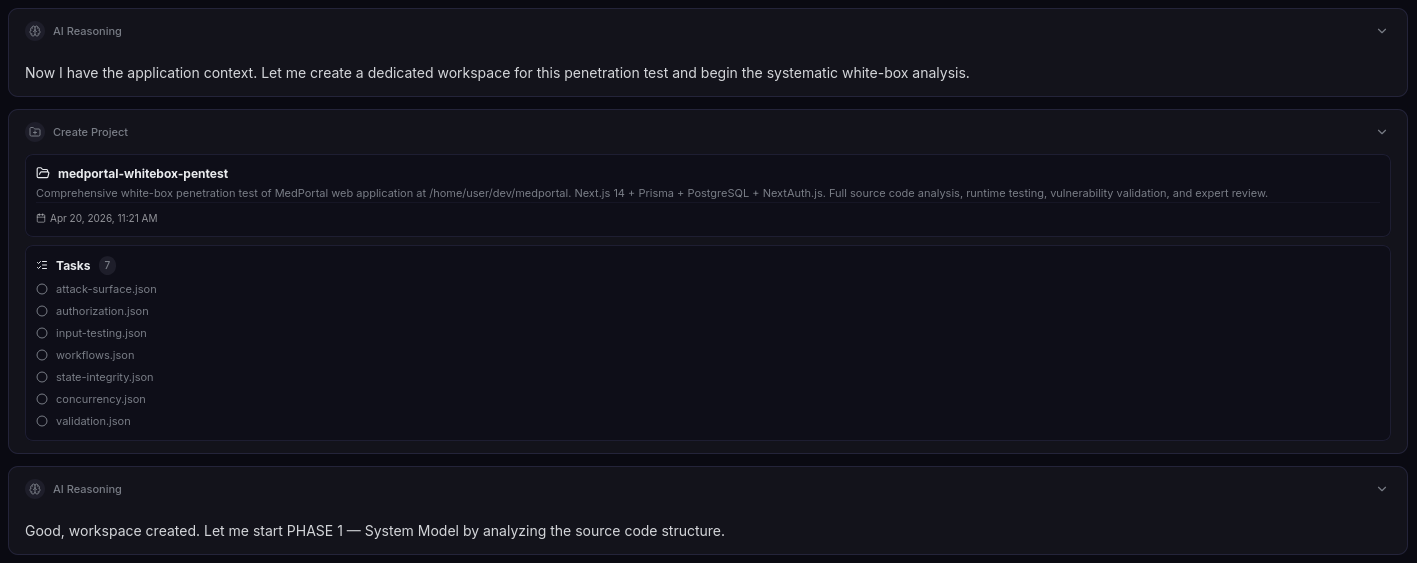

1. Overview

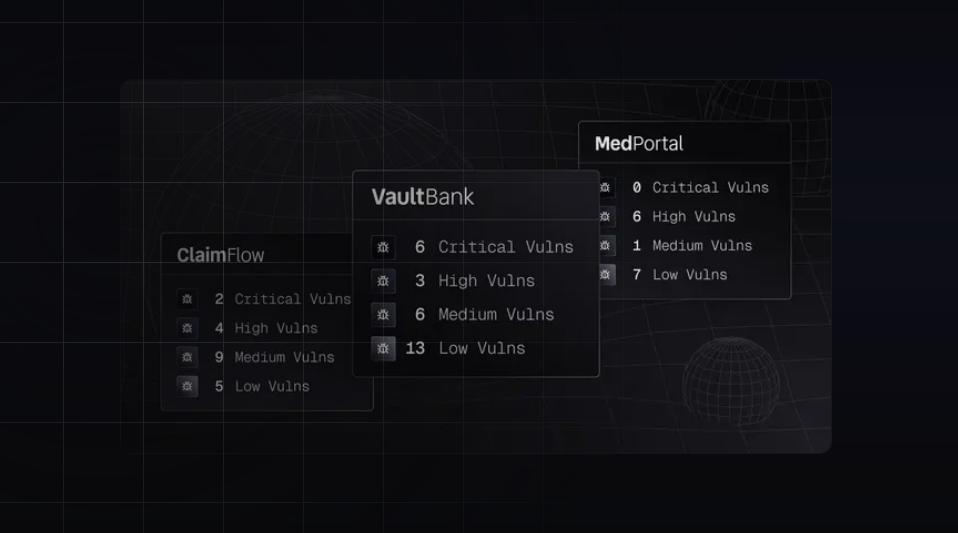

This analysis compares BackBox AI's penetration test findings against the results published by ProjectDiscovery in their "Vibe Coding" research article. PD's article evaluated five tools — Neo (their proprietary AI agent), Claude (Anthropic), Snyk, Invicti, and Semgrep — against three intentionally vulnerable applications. We focus exclusively on the MedPortal application.

Key Figures

| Metric | ProjectDiscovery (Neo+Claude) | BackBox AI |
|---|---|---|
| Total Valid Findings (all severities) | 20 | 17 |
| Valid Findings (Medium+ only) | 7 | 15 (raw), ~11 (normalized by vuln class) |
| High+ Severity | 6 | 10 |
| High (individual findings) | 6 | 10 |
| Critical (chained scenario) | 0 | 1 (CVSS 9.5+ chain) |
| Medium | 1 | 5 |
| Low (excluded) | 7 | 2 |
| Info (excluded) | 6 | 0 |
| False Positives (self-assessed) | 0 | 0 |

Methodology Notes

Granularity approach: BackBox AI decomposes findings by individual endpoint and resource type (e.g., BOLA on patients, BOLA on appointments, BOLA on referrals as three separate findings). ProjectDiscovery consolidates by vulnerability class (e.g., one "BOLA/IDOR" finding covering all endpoints). This yields different raw counts even when the same underlying vulnerabilities are identified.

Normalized comparison: When findings are grouped by vulnerability class rather than endpoint, BackBox AI covers approximately 11 distinct vulnerability classes at Medium+ severity, versus roughly 5 to 6 for ProjectDiscovery's combined Neo and Claude results. The normalized comparison still shows broader coverage, but the gap is smaller than the raw 15 vs. 7 headline suggests.
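
The effect of the two granularity approaches can be illustrated with a minimal sketch. It uses only a subset of the BackBox AI findings named in this report, and the mapping of each finding ID to a class label is an assumption made for illustration (in particular, V-01 is assumed to be the patients BOLA finding); it does not reproduce the full 15 vs. ~11 calculation.

```python
from collections import defaultdict

# Illustrative subset of BackBox AI findings. The class labels are assumptions
# for this sketch, not the report's authoritative mapping.
FINDINGS = [
    ("V-01", "BOLA / IDOR"),             # assumed: patients endpoint
    ("V-02", "BOLA / IDOR"),             # appointments endpoint
    ("V-05", "BOLA / IDOR"),             # referrals endpoint
    ("V-06", "Password Hash Exposure"),
    ("V-13", "Search Data Disclosure"),
]


def group_by_class(findings):
    """Group endpoint-level finding IDs under their vulnerability class."""
    grouped = defaultdict(list)
    for finding_id, vuln_class in findings:
        grouped[vuln_class].append(finding_id)
    return dict(grouped)


grouped = group_by_class(FINDINGS)
raw_count = len(FINDINGS)        # one entry per endpoint-level finding
normalized_count = len(grouped)  # one entry per vulnerability class

print(f"raw={raw_count} normalized={normalized_count}")
for vuln_class, ids in grouped.items():
    print(f"{vuln_class}: {', '.join(ids)}")
```

In this subset, three endpoint-level BOLA findings collapse into a single class, which is the same mechanism that separates the raw and normalized totals above.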

2. Detailed Finding-by-Finding Comparison (Medium+ Only)

2.1 Confirmed Matches — Both Sides Agree

| ProjectDiscovery ID | BackBox AI ID | Finding | PD Severity | BackBox AI Severity | Alignment |
|---|---|---|---|---|---|
| MED-001 | V-06 | Password Hash Exposure in API Responses | HIGH | HIGH (Critical via chain) | Confirmed. BackBox AI rates impact higher due to chainability with BOLA (CVSS 9.5+ compound risk). |
| MED-002 | V-04 | Privilege Escalation via Mass Assignment (Role) | HIGH | HIGH | Confirmed. Near-exact match. |
| MED-003 | V-04 + V-09 + V-10 + V-14 | Mass Assignment Across All API Endpoints | HIGH | HIGH (distributed) | Partial match. PD captures this as one systemic finding. BackBox AI decomposes into endpoint-specific instances across multiple findings. |
| MED-004 | V-01 + V-02 + V-05 | BOLA / IDOR: No Ownership Verification | HIGH | HIGH | Confirmed. Same vulnerability class. BackBox AI decomposes into 3 endpoint-specific findings; PD consolidates into 1. |
| MED-006 | V-13 | Search API Exposes Data Without Role Restriction | MEDIUM | MEDIUM | Confirmed. Exact match. |

2.2 Discrepancy — ProjectDiscovery Valid, BackBox AI Did Not Report

| ProjectDiscovery ID | Finding | PD Severity | BackBox AI Status | Analysis |
|---|---|---|---|---|
| MED-005 | Middleware Only Protects Dashboard Routes: No Defense-in-Depth for API | HIGH | Not reported as distinct finding | BackBox AI's BOLA findings implicitly validated this (API endpoints lack authorization), but an architectural finding about defense-in-depth failure is qualitatively different from individual endpoint vulnerabilities. This is a genuine analytical gap: BackBox AI identified the instances but missed the systemic pattern. |
| MED-033 | Nurse Creates Prescriptions: Privilege Escalation | HIGH | Not reported | Genuine miss. The NURSE role can access POST /api/prescriptions, which should be restricted to DOCTOR. This is a business logic / role boundary violation that requires understanding the domain constraint. |
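
A role boundary violation such as MED-033 can be surfaced by comparing observed responses against an explicit role policy. The sketch below is a minimal illustration under stated assumptions: the policy entry is taken from the MED-033 description (only DOCTOR should create prescriptions), while the observed status codes are hypothetical sample data, not output from either study.

```python
# Expected policy, taken from the MED-033 description: only DOCTOR may call
# POST /api/prescriptions. Any other endpoint is outside this sketch.
EXPECTED_ALLOWED = {
    ("POST", "/api/prescriptions"): {"DOCTOR"},
}

# Hypothetical observations: (role, method, path) -> HTTP status seen in testing.
OBSERVED = {
    ("DOCTOR", "POST", "/api/prescriptions"): 201,
    ("NURSE", "POST", "/api/prescriptions"): 201,
    ("PATIENT", "POST", "/api/prescriptions"): 403,
}


def find_role_violations(observed, expected_allowed):
    """Return requests that succeeded for a role the policy does not allow."""
    violations = []
    for (role, method, path), status in sorted(observed.items()):
        allowed_roles = expected_allowed.get((method, path))
        if allowed_roles is None:
            continue  # no policy recorded for this endpoint
        succeeded = 200 <= status < 300
        if succeeded and role not in allowed_roles:
            violations.append((role, method, path, status))
    return violations


violations = find_role_violations(OBSERVED, EXPECTED_ALLOWED)
for role, method, path, status in violations:
    print(f"{role} unexpectedly allowed: {method} {path} -> {status}")
```

The check only works when the domain constraint is written down as policy first, which is the step this class of finding depends on.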

2.3 Critical Discrepancy — ProjectDiscovery Marked as FALSE POSITIVE, BackBox AI Validated

| ProjectDiscovery ID | BackBox AI ID | Finding | PD Status | BackBox AI Status | Analysis |
|---|---|---|---|---|---|
| MED-026 | V-03 | Audit Log Forgery: Any Authenticated User Can Write Logs | FALSE POSITIVE | HIGH (Validated) | Major discrepancy. Both Neo and Claude (two of the tools in PD's study) independently detected this as TRUE, but PD's human reviewers classified it as a false positive. BackBox AI independently validated it with runtime evidence: a Patient POST to /api/audit-logs returns 201, and the forged entries are visible in the admin audit logs. Source code confirms no role restriction on the endpoint. Without access to PD's full review rationale, the reason for this dismissal cannot be determined. |
| MED-025 | V-07 (partial) | Notification Injection via Arbitrary User Targeting | FALSE POSITIVE | Partially covered (V-07) | Both Neo and Claude found TRUE. PD marked FP. BackBox AI partially captures this via V-07 (Stored XSS in Notifications), but did not call out the arbitrary user targeting aspect as a separate concern. |
| MED-027 | V-17 | Hardcoded Demo Credentials in Client-Side Code | FALSE POSITIVE | LOW (Validated) | Neo, Claude, and Snyk all found TRUE. PD marked FP. BackBox AI validated as V-17 (Low severity). Below comparison threshold but worth noting. |
| MED-031 | V-15 | Stale Demo Share Token in Seed Data | FALSE POSITIVE | MEDIUM (Validated) | BackBox AI validated the hardcoded seed token and demonstrated expiry manipulation to 2099 via V-15 (Predictable Share Link Token). |
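
The runtime check described for MED-026 (a Patient POST to /api/audit-logs returning 201, followed by the forged entry appearing in the admin view) could be sketched as follows. This is an illustration under assumptions, not BackBox AI's actual test harness: the payload field name, the admin GET route, and the token values are hypothetical, and the transport is injected so the example runs against an in-memory stand-in rather than a live application.

```python
import json
from typing import Callable, Tuple

# A transport takes (method, path, json_body, session_token) and returns
# (status_code, response_text). In real use it would wrap an HTTP client.
Transport = Callable[[str, str, str, str], Tuple[int, str]]


def probe_audit_log_forgery(send: Transport, patient_token: str, admin_token: str) -> bool:
    """Return True if a patient can write an entry that the admin log view shows."""
    marker = "forged-entry-probe"
    payload = json.dumps({"action": marker})  # field name is hypothetical
    status, _ = send("POST", "/api/audit-logs", payload, patient_token)
    if status != 201:
        return False  # write was rejected, so no forgery
    # Assumed admin read route; the report only states the entries were visible.
    status, body = send("GET", "/api/audit-logs", "", admin_token)
    return status == 200 and marker in body


def fake_vulnerable_app() -> Transport:
    """In-memory stand-in that accepts audit log writes from any token."""
    log = []

    def send(method, path, body, token):
        if path != "/api/audit-logs":
            return 404, ""
        if method == "POST":
            log.append(json.loads(body))  # no role check: the flaw under test
            return 201, ""
        if method == "GET" and token == "admin":
            return 200, json.dumps(log)
        return 403, ""

    return send


vulnerable_result = probe_audit_log_forgery(fake_vulnerable_app(), "patient", "admin")
print("audit log forgery reproduced:", vulnerable_result)
```

Pairing the write with a read through the admin view matters because a 201 alone does not show that the forged entry reaches the log reviewers rely on.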

2.4 Findings Unique to BackBox AI or Reported Differently by ProjectDiscovery

| BackBox AI ID | Finding | Severity | Notes |
|---|---|---|---|
| V-02 | BOLA on Appointments (Delete/Modify) | HIGH | Subset of PD's MED-004 but with distinct DELETE/PATCH evidence. |
| V-05 | BOLA on Referrals (No Auth Check) | HIGH | Not separately identified by PD. Any role can view any referral. |
| V-07 | Stored XSS in Messages/Notifications/Prescriptions | MEDIUM | Not reported by PD. Unsanitized HTML stored in multiple endpoints. Downgraded due to Next.js auto-escaping. |
| V-08 | No Rate Limiting on Login | HIGH | PD has MED-007 (same finding, rated LOW). BackBox AI rates HIGH due to brute-force feasibility in a healthcare context. |
| V-09 | Share Link Ownership Bypass | HIGH | No PD equivalent. Any patient can modify any other patient's share links. |
| V-10 | Lab Result Falsification | HIGH | No PD equivalent. Lab techs can modify any lab result values without audit. Clinically significant. |
| V-11 | No File Type Validation on Upload | HIGH | No PD equivalent. Upload endpoint accepts arbitrary file types. |
| V-12 | Missing Security Headers | MEDIUM | PD has MED-008 (LOW) + MED-015/MED-016 (INFO). BackBox AI consolidates and rates higher. |
| V-14 | Message Content Tampering | MEDIUM | No PD equivalent. Messages mutable after creation. |
| V-15 | Predictable Share Link Token | MEDIUM | PD has MED-031 marked as FP. BackBox AI validated. |

3. Summary Comparison Table (Normalized by Vulnerability Class)

| Vulnerability Class | ProjectDiscovery | BackBox AI | Assessment |
|---|---|---|---|
| BOLA / IDOR | 1 finding, HIGH | 1 finding (consolidated), HIGH | Both detected. BackBox AI provides endpoint-level granularity. |
| Mass Assignment / Mutable State | 2 findings, HIGH | 2-3 findings, HIGH | Roughly equivalent coverage. |
| Password Hash Exposure | 1 finding, HIGH | 1 finding, HIGH (Critical via chain) | Aligned. BackBox AI identifies compounding chain. |
| Search Data Disclosure | 1 finding, MEDIUM | 1 finding, MEDIUM | Exact match. |
| Authorization Architecture Gap | 1 finding, HIGH | Not reported | PD advantage. |
| Role Boundary Violation | 1 finding, HIGH | Not reported | PD advantage (nurse prescriptions). |
| Audit Log Integrity | Dismissed (FP) | 1 finding, HIGH | BackBox AI advantage. Independently validated. |
| Stored XSS | Not reported | 1 finding, MEDIUM | BackBox AI advantage. |
| Rate Limiting | 1 finding, LOW | 1 finding, HIGH | Both found. BackBox AI rates higher. |
| Security Headers | 1 LOW + 2 INFO | 1 finding, MEDIUM | Both found. BackBox AI rates higher. |
| Share Link Vulnerabilities | Dismissed (FP) | 2 findings, HIGH + MEDIUM | BackBox AI advantage. Validated what PD dismissed. |
| Lab Result Integrity | Not reported | 1 finding, HIGH | BackBox AI advantage. Clinically significant. |
| File Upload Validation | Not reported | 1 finding, HIGH | BackBox AI advantage. |
| Message Integrity | Not reported | 1 finding, MEDIUM | BackBox AI advantage. |
| Distinct Vuln Classes (Medium+) | ~5-6 | ~11 | BackBox AI demonstrates broader class coverage. |

4. Severity Comparison

A notable pattern: BackBox AI systematically rates findings higher than ProjectDiscovery. This reflects a different risk assessment philosophy — BackBox AI evaluates findings in the context of a healthcare application handling Protected Health Information (PHI), where the business impact of data exposure or manipulation is amplified by HIPAA compliance requirements and patient safety considerations.

| Finding | PD Severity | BackBox AI Severity | Rationale for Difference |
|---|---|---|---|
| BOLA / IDOR | HIGH | HIGH | Aligned. |
| Rate Limiting | LOW | HIGH | Healthcare context: a successful brute-force login leads to PHI access, which raises impact. |
| Security Headers | LOW / INFO | MEDIUM | Defense-in-depth for PHI-protecting application. |
| Audit Log Forgery | FP (dismissed) | HIGH | Audit integrity is critical for HIPAA §164.312(b) (audit controls). |
| Share Link Issues | FP (dismissed) | HIGH / MEDIUM | PHI sharing links with no ownership check. |

5. Chained Vulnerability Analysis — The CVSS 9.5+ Scenario

BackBox AI's most significant contribution is the identification of compounding attack chains:

Chain: Credential Compromise Pipeline (CVSS 9.5+)

V-08 (No rate limit) → V-01 (BOLA) → V-06 (Hash exposure) → Offline cracking

- Brute-force login (no rate limiting)

- Use BOLA to read any patient/doctor/user record

- Harvest bcrypt password hashes from API responses

- Crack hashes offline, compromising all accounts

This chain represents a genuinely Critical compounded risk (CVSS 9.5+) that is only visible when findings are analyzed holistically rather than individually. PD's study did not identify compounding chains.
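
To show how a score in the Critical band arises, the sketch below implements the CVSS v3.1 base score equations. The report does not publish a vector for the chain, so the vector used here is a hypothetical assumption chosen to represent a network-reachable, unauthenticated, full-compromise outcome; it is not BackBox AI's actual scoring input.

```python
import math

# Metric weights from the CVSS v3.1 specification.
AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
AC = {"L": 0.77, "H": 0.44}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}
PR_UNCHANGED = {"N": 0.85, "L": 0.62, "H": 0.27}
PR_CHANGED = {"N": 0.85, "L": 0.68, "H": 0.5}


def roundup(value: float) -> float:
    """Round up to one decimal place, as defined in the v3.1 specification."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score(vector: str) -> float:
    """Compute the CVSS v3.1 base score for a vector such as 'AV:N/AC:L/...'."""
    m = dict(part.split(":") for part in vector.split("/"))
    scope_changed = m["S"] == "C"
    iss = 1 - (1 - CIA[m["C"]]) * (1 - CIA[m["I"]]) * (1 - CIA[m["A"]])
    if scope_changed:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    else:
        impact = 6.42 * iss
    pr = (PR_CHANGED if scope_changed else PR_UNCHANGED)[m["PR"]]
    exploitability = 8.22 * AV[m["AV"]] * AC[m["AC"]] * pr * UI[m["UI"]]
    if impact <= 0:
        return 0.0
    if scope_changed:
        return roundup(min(1.08 * (impact + exploitability), 10))
    return roundup(min(impact + exploitability, 10))


# Hypothetical vector for the chained scenario (an assumption, not from the report).
CHAIN_VECTOR = "AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H"
chain_score = base_score(CHAIN_VECTOR)
print(CHAIN_VECTOR, "->", chain_score)
```

Under this assumed vector the base score is 9.8. A vector requiring privileges or limited to confidentiality impact would score lower, which is why the individual findings are rated HIGH while the chain is treated as Critical.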

6. False Positive / False Negative Analysis

BackBox AI Gaps (False Negatives)

| Finding | PD Severity | Nature of Gap |
|---|---|---|
| MED-033: Nurse Creates Prescriptions | HIGH | Genuine miss. Business logic / role boundary violation requiring domain understanding. |
| MED-005: Middleware Architecture Gap | HIGH | Individual instances found (BOLA endpoints) but systemic pattern not identified as distinct architectural finding. |

ProjectDiscovery Potentially Incorrect Dismissals

| Finding | PD Status | BackBox AI Validation | Assessment |
|---|---|---|---|
| MED-026: Audit Log Forgery | FALSE POSITIVE | HIGH, validated with runtime evidence + source code | BackBox AI independently confirmed. Both Neo and Claude also detected TRUE. PD's reasoning for dismissal is not documented in published data. |
| MED-025: Notification Injection | FALSE POSITIVE | Partially validated via V-07 | Neo and Claude also detected TRUE. |
| MED-027: Hardcoded Credentials | FALSE POSITIVE | LOW, validated as V-17 | Neo, Claude, and Snyk all detected TRUE. |
| MED-031: Stale Share Token | FALSE POSITIVE | MEDIUM, validated as V-15 | Hardcoded seed token + expiry manipulation confirmed. |

BackBox AI False Positives

None identified. All 17 findings were validated with runtime evidence and confirmed by expert review. Note: this is a self-assessment; independent third-party validation was not performed.

7. Context and Limitations

Vendor self-comparison: This analysis was produced by or for BackBox AI, comparing its own product against tools evaluated in a third-party study. This represents an inherent conflict of interest.

Asymmetric methodology: BackBox AI (AI-powered white-box analysis with expert review) was tested under potentially different conditions than the tools in PD's study. Time allocated, scope definition, and tool configuration may all differ.

PD study intent: PD's "Vibe Coding" article is research into AI-assisted vulnerability discovery in AI-generated code, not a formal product benchmark. Using it as a competitive comparison point should acknowledge this context.

Raw data access: The comparison relies on PD's published summary (FINDINGS.csv), not their full methodology, tool configurations, or review criteria.

Traditional tool context: PD's data shows Snyk, Invicti, and Semgrep found zero valid Medium+ findings for MedPortal. These are SAST/DAST tools operating under different constraints than AI-powered analysis; the comparison is asymmetric by nature.

8. Key Takeaways

| # | Takeaway | Confidence |
|---|---|---|
| 1 | BackBox AI demonstrated broader vulnerability class coverage than all tools in PD's study for MedPortal (~11 vs. ~5-6 distinct vulnerability classes at Medium+ severity) | High |
| 2 | BackBox AI independently validated the audit log forgery vulnerability that PD dismissed as false positive, with runtime evidence and source code analysis | High |
| 3 | BackBox AI identified a compounding attack chain (BOLA + hash exposure + brute-force = CVSS 9.5+) not identified by any tool in PD's study | High |
| 4 | BackBox AI missed one business logic violation (nurse creating prescriptions, MED-033) that PD's tools identified, and did not report the middleware architecture gap (MED-005) as a distinct finding | Confirmed |
| 5 | Traditional SAST/DAST tools (Snyk, Invicti, Semgrep) found zero valid Medium+ findings for MedPortal per PD's published data | Medium (attributed to PD; tool configurations unknown) |
| 6 | BackBox AI's findings include clinically significant vulnerabilities (lab result falsification, share link PHI exposure) not identified by any tool in PD's study | High |

9. Conclusion

BackBox AI demonstrates meaningfully broader vulnerability coverage than all tools evaluated in ProjectDiscovery's "Vibe Coding" study for the MedPortal application. When findings are normalized by vulnerability class, BackBox AI covers approximately twice as many distinct vulnerability categories at Medium+ severity. BackBox AI also provides unique value through compounding chain analysis and identifies clinically significant vulnerabilities in a healthcare context that other tools did not surface.

The most notable strength is the independent validation of the audit log forgery vulnerability (dismissed as FP by PD but confirmed by BackBox AI with runtime evidence). The most notable gap is the missed nurse-to-prescription privilege escalation (MED-033), a business logic boundary violation requiring domain-specific understanding.

All findings and conclusions in this report are derived from the sources cited. No external data or assumptions beyond the published evidence have been introduced.

Source (ProjectDiscovery):

https://github.com/projectdiscovery/research/tree/main/vibe-coding/FINDINGS.csv